Swift is a type-safe language.

Put another way, it is strict about data types.

When we create a variable, such as:

var age = 16

… Swift uses type inference to guess what data type we wanted. In the example above, age is created as an integer.
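One way to check which type was inferred is to ask Swift directly. The sketch below uses the standard library function type(of:); the variable height and its value are made up for illustration and do not appear in the lesson.

```swift
// Swift infers the type from the literal on the right-hand side.
var age = 16        // whole-number literal, so age is an Int
var height = 1.68   // hypothetical example value; decimal literal, so height is a Double

print(type(of: age))     // prints "Int"
print(type(of: height))  // prints "Double"
```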

This means that later on, if we tried to assign age a value of 16.5, we would get an error:

age = 16.5

Since Swift is a type-safe language, you cannot assign a Double to a variable that is an Int.
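If a variable needs to hold fractional values later, one option is to give it a type annotation so that it is a Double from the start. This is a minimal sketch; the name preciseAge is hypothetical.

```swift
// A type annotation overrides the default inference for a whole-number literal.
var preciseAge: Double = 16   // stored as 16.0, a Double
preciseAge = 16.5             // allowed: the variable already holds a Double

print(preciseAge)             // prints "16.5"
```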

To borrow a metaphor, it is like trying to put a square peg in a round hole.

The same is true for mathematical operations.

Consider this code:

var price = 25

var increase = 2.75

var newPrice = price + increase

This code will not compile. price is an Int and increase is a Double. Swift will not permit us to add two values held in different data types.

Why, though?

On a technical level, one reason is that integers (whole numbers) are stored directly as a binary number in a fixed number of bits, as we learned earlier in this module. Decimal values – a Double in Swift – can represent parts of a whole, and are stored using a very different, floating-point format made up of a sign, an exponent, and a significand.
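The difference in storage can be glimpsed from Swift itself. The sketch below reuses the lesson's values of 25 and 2.75 and relies on standard library properties of Double (sign, exponent, significand); it is meant as an illustration of the idea rather than a full account of the floating-point format.

```swift
let price = 25
let increase = 2.75

// An Int is stored directly as a binary number.
print(String(price, radix: 2))   // prints "11001"

// A Double is stored as a sign, an exponent, and a significand,
// where value = significand * 2^exponent.
print(increase.sign)             // prints "plus"
print(increase.exponent)         // prints "1"
print(increase.significand)      // prints "1.375"
```

Here 1.375 × 2 gives back 2.75, which shows that the two kinds of value cannot simply be added bit by bit.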

Another reason Swift will not permit us to add values held in different data types is that the result of such an operation is hard to predict, especially for new programmers.

Let’s reconsider the code:

var price = 25

var increase = 2.75

var newPrice = price + increase

To add those two values together, theoretically, Swift could do at least three different things:

- Convert price to a Double, then add increase, resulting in newPrice being a Double with the value 27.75.

- Keep price as an Int, then convert increase into an Int by dropping the decimal part of the value, resulting in newPrice being an Int and holding the value 27.

- Keep price as an Int, then convert increase into an Int by rounding up, resulting in newPrice being an Int and holding the value 28.
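To make the three possibilities concrete, each one can be written out by hand in Swift. This is only a sketch: the constant names asDouble, truncated, and roundedUp are invented for illustration, and rounded(.up) is the standard library method used here to stand in for "rounding up".

```swift
let price = 25
let increase = 2.75

// Option 1: convert price to a Double, then add.
let asDouble = Double(price) + increase              // 27.75

// Option 2: drop the decimal part of increase.
let truncated = price + Int(increase)                // 25 + 2 = 27

// Option 3: round increase up before converting.
let roundedUp = price + Int(increase.rounded(.up))   // 25 + 3 = 28

print(asDouble, truncated, roundedUp)                // prints "27.75 27 28"
```

The same two inputs give three different answers, depending on which conversion is chosen.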

Some languages, such as Python, make a choice like this automatically (Python takes the first option and produces 27.75). This is known as implicit data type conversion.

The challenge is that many programmers forget the specific ways that a given language performs an implicit data type conversion. This can lead to the creation of logical errors, or bugs, in a program or app.

To avoid that problem, Swift requires explicit data type conversion.

In other words, we must tell the Swift compiler exactly what to do.

To add these two values, we can take one of two possible approaches.

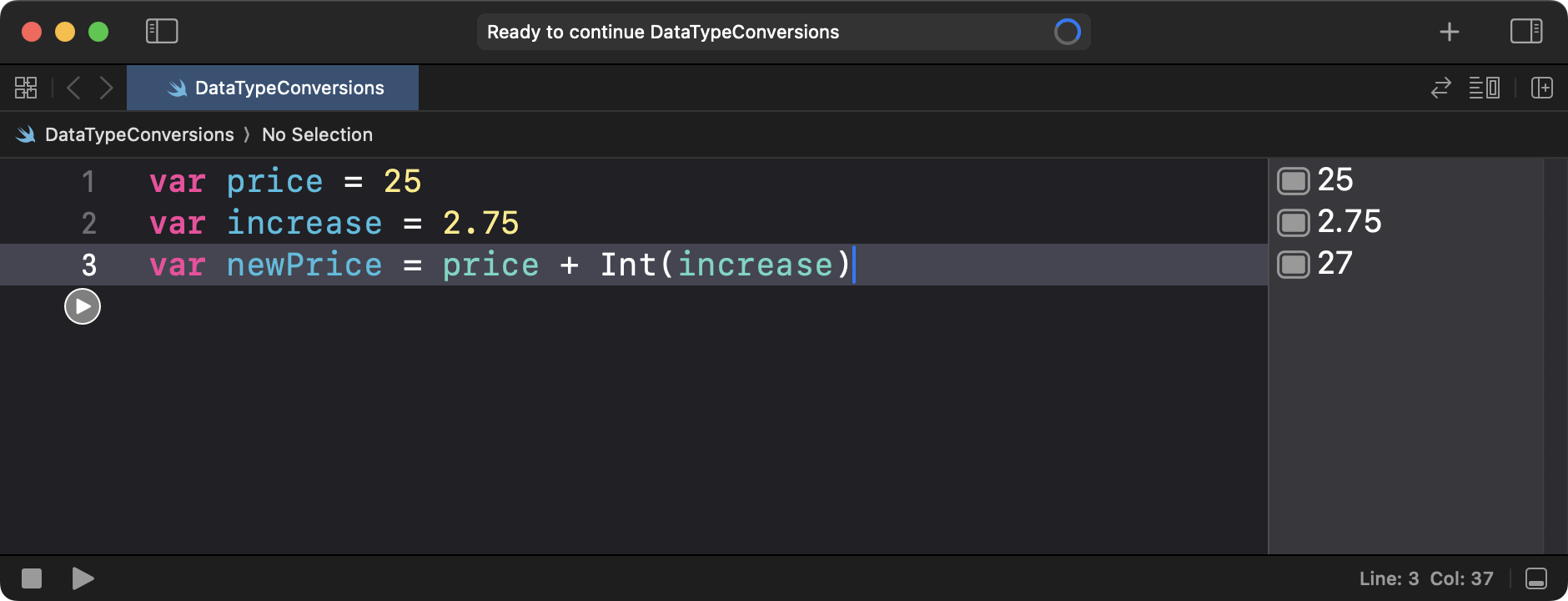

The first approach is to temporarily convert the value held in increase into an Int, resulting in newPrice becoming an Int as well:

var price = 25

var increase = 2.75

var newPrice = price + Int(increase)

Take note of what value newPrice holds after the two values are added:

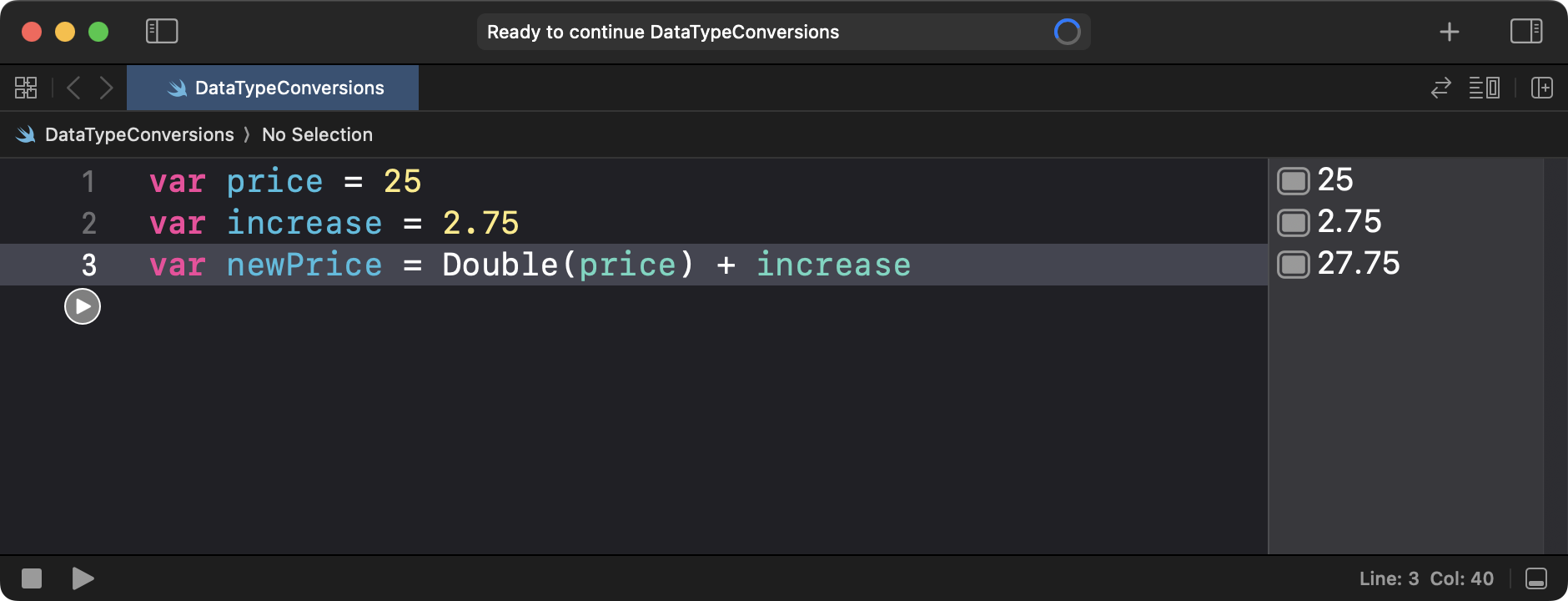

The second approach is to temporarily convert the value held in price into a Double, resulting in newPrice becoming a Double:

var price = 25

var increase = 2.75

var newPrice = Double(price) + increase

Take note of what value newPrice now holds after the two values are added.

In either case – with explicit data type conversions – what is happening is clear to the programmer.

In the first case, newPrice will end up being an integer, or Int. It’s easy to remember that Swift always drops the decimal portion of a value when converting a Double to an Int – here, 2.75 becomes 2, so newPrice holds 27.

In the second case, newPrice will end up being a decimal value, or Double, and holds 27.75 as its value.
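A few edge cases of the Double-to-Int conversion are worth trying out. The sketch below assumes a negative value, decrease, that is not part of the lesson, and uses the standard library's rounded() method and Int(exactly:) initializer.

```swift
let increase = 2.75
let decrease = -2.75   // hypothetical value, added to show the negative case

print(Int(increase))                   // prints "2"
print(Int(decrease))                   // prints "-2" (truncates toward zero)

// Rounding is a separate, explicit step.
print(Int(increase.rounded()))         // prints "3"

// Int(exactly:) returns nil when the value has a fractional part.
print(Int(exactly: increase) == nil)   // prints "true"
```

Because each of these is spelled out in the code, a reader can see which behaviour was intended without having to remember a language rule.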

Reflection prompts

- How is explicit data type conversion different from implicit data type conversion?

- What are some possible disadvantages of implicit data type conversions?

- Which approach do you prefer: implicit data type conversions, as is done in languages like Python, or explicit data type conversions, as is required by Swift?